Red Hat Documentation

Find answers, get step-by-step guidance, and learn how to use Red Hat products.

Explore popular products

Red Hat Enterprise Linux

Support application deployments, from on-premises to the cloud to the edge, in a flexible operating environment.

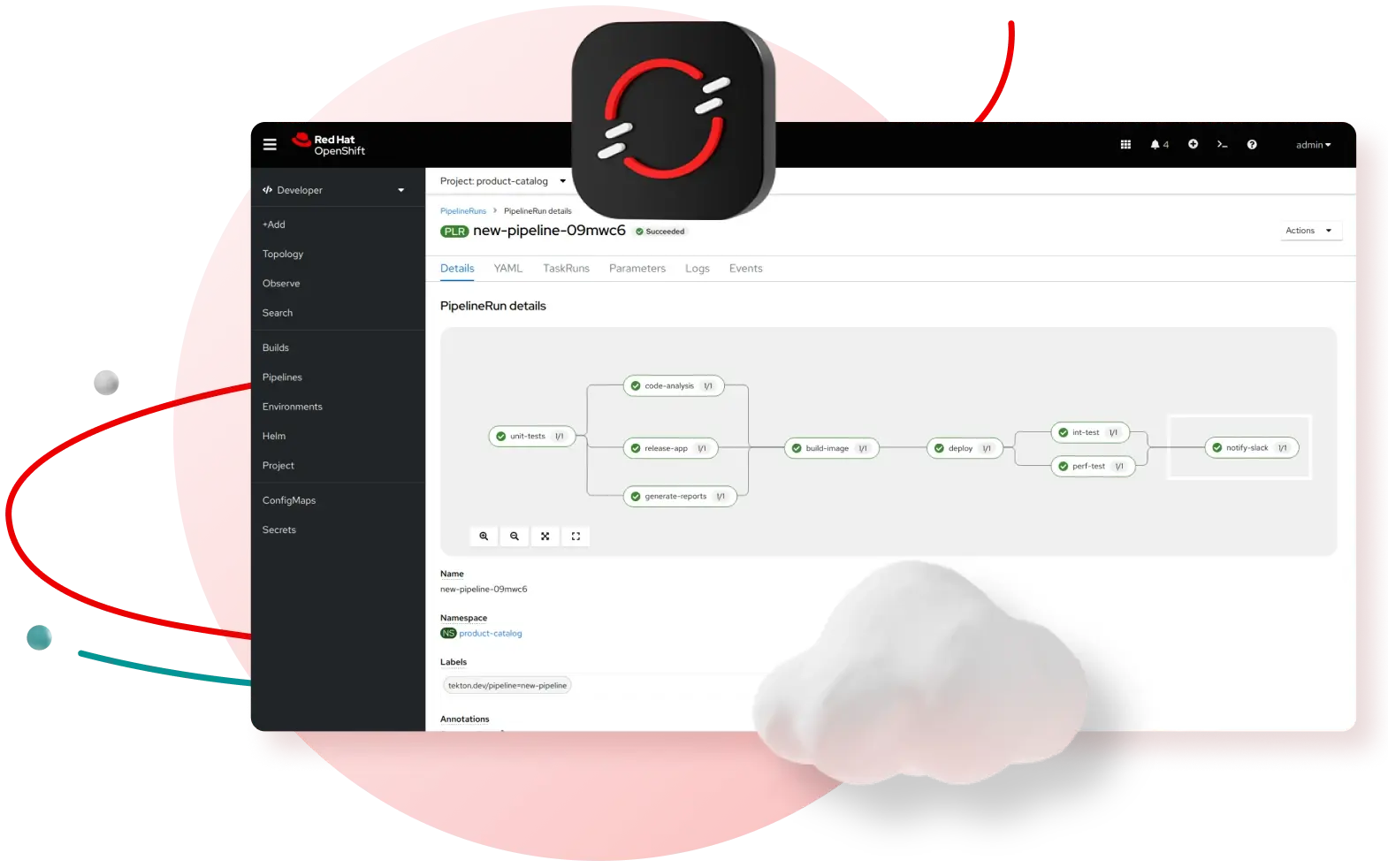

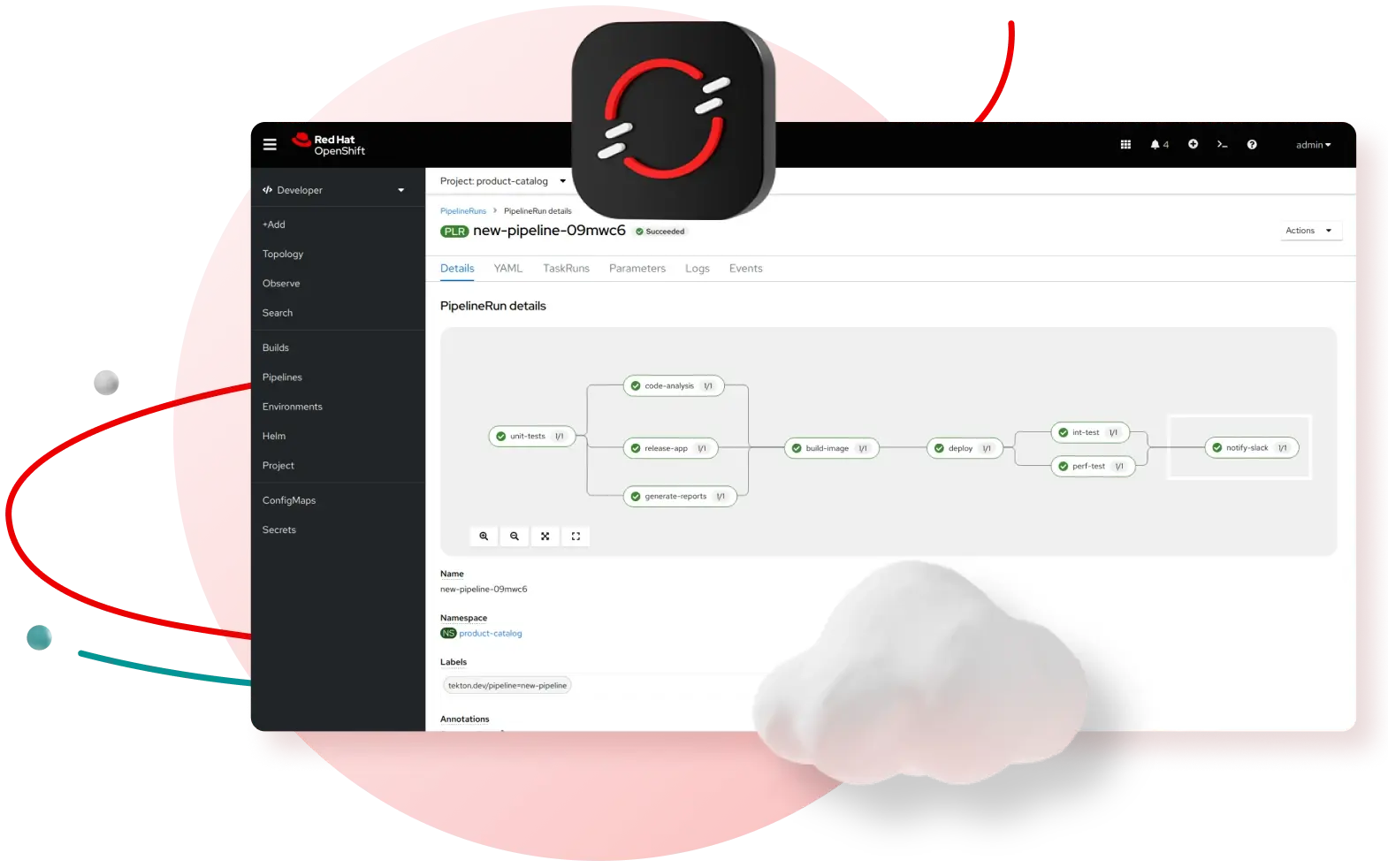

Red Hat OpenShift Container Platform

Quickly build and deploy applications at scale, while you modernize the ones you already have.

Red Hat Ansible Automation Platform

Create, manage, and dynamically scale automation across your entire enterprise.

Red Hat OpenShift Service on AWS

A fully managed service to build and manage your containerized applications on AWS.

Red Hat Enterprise Linux AI

Develop, test, and run Granite large language models (LLMs) on a foundation model platform.

Explore common use cases

Building your RHEL AI environment

Instructions on how to initialize and set up the RHEL AI environment.

Getting started with Ansible Automation Platform

Get started automating for administrators, developers, and operators.

Manage your VMs with OpenShift Virtualization

Run and manage virtual machines alongside container workloads.

Identify and resolve issues by using Red Hat Insights

Install Red Hat Insights to discover and predict risks, recommend actions, and track costs.

Learn about the Red Hat OpenShift Service on AWS (ROSA) with hosted control planes (HCP) deployment model

Install, access, and delete Red Hat OpenShift Service on AWS (ROSA) clusters that use hosted control planes.

Building a hybrid cloud with Red Hat OpenStack Services on OpenShift

Deploy and manage virtualized and containerized applications in a scalable infrastructure with a Red Hat OpenShift control plane.

Improving developer productivity with Red Hat Developer Hub

Install, configure, and customize Red Hat Developer Hub, an enterprise-grade platform for building developer portals.

Using image mode for RHEL to manage operating systems

Instructions for using image mode for RHEL to build, deploy, and manage the operating system as if it were a container.

Red Hat OpenShift essentials

Complete common Red Hat OpenShift tasks with our curated resources, while taking your product knowledge to the next level. Find answers based on your environment and role.

Cloud services

Self-managed

Explore learning resources

Get started with Red Hat

Discover the value of your Red Hat products with these learning paths.

Red Hat Developer

Learn how to build flexible and reliable applications with Red Hat solutions.

Red Hat Hybrid Cloud Console

Build, deploy, and optimize workloads across the hybrid cloud with Red Hat.

Managed OpenShift tutorials

Red Hat experts share step-by-step tutorials to help maximize your cluster.

Red Hat Hybrid Cloud learning paths

Build on your Red Hat® Cloud Services expertise with these learning resources.

Interactive labs

Hands-on, interactive lessons based on real use cases with Red Hat products.

Customer Portal labs

Troubleshoot issues, identify security problems, and more with these labs.

Architecture Center

Showcase of examples and partner uses for building with Red Hat solutions.

Training and certification

Gain the knowledge to get certified and stay ahead of technology trends.